PBX traffic load is generally measured in units of 100 call-seconds known as Centum Call Seconds (CCS); centum is Latin for 100. The maximum traffic load per station user during the Busy Hour is 36 CCS, which is shorthand for 3,600 call-seconds. Thirty-six CCS is equivalent to 60 minutes, or 1 hour, of traffic load. A station port (telephone, facsimile terminal, modem, etc.) that “talks,” or connects, to the switch network for 10 minutes during 1 hour has a traffic rating of 6 CCS (10 minutes = 600 seconds = 6 units of 100 call-seconds). Combining the station user traffic load with an acceptable GoS level results in the following station user traffic requirement: 6 CCS, P(0.01). This notation signifies that a station user with an expected 6 CCS traffic load is willing to accept a 1 percent probability of call blocking when attempting to use the switch network. A 2 percent blocking probability would be expressed as 6 CCS, P(0.02); a 0.1 percent blocking probability would be expressed as 6 CCS, P(0.001).
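The conversion described above can be sketched in a few lines of Python. This is only an illustration of the arithmetic; the function names `minutes_to_ccs` and `format_requirement` are hypothetical and do not come from any PBX tool.

```python
SECONDS_PER_CCS = 100  # one CCS is 100 call-seconds


def minutes_to_ccs(minutes: float) -> float:
    """Convert Busy Hour connect time in minutes to CCS."""
    return minutes * 60 / SECONDS_PER_CCS


def format_requirement(ccs: float, blocking: float) -> str:
    """Render a traffic requirement in the 'N CCS, P(x)' notation used in the text."""
    return f"{ccs:g} CCS, P({blocking:g})"


print(minutes_to_ccs(10))                             # 6.0
print(minutes_to_ccs(60))                             # 36.0
print(format_requirement(minutes_to_ccs(10), 0.01))   # 6 CCS, P(0.01)
```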

A traffic rating of 36 CCS, P(0.01), is used for station users who require virtually nonblocking switch network access. A 36 CCS traffic load is a worst-case situation because it is the maximum station user traffic load during the Busy Hour. The usual station user traffic rating requirement is about 6 to 9 CCS, P(0.01). Although a station user might be on a call that lasts for 1 hour or more (a 36 CCS traffic load), there is a very small probability that all station users are simultaneously engaged in calls of at least 1 hour during the same Busy Hour. It is far more likely that an individual station user will have a 0 CCS rather than a 36 CCS traffic load during the Busy Hour because that person may be in a meeting, traveling, on vacation, or too busy with paperwork to take or place telephone calls. Even if a station user makes several calls per hour, each may be of short duration because many calls today are answered by a VMS that limits the time available to leave a message. Most business-to-business calls today are connections between a station user and a VMS; each of these calls typically lasts less than 2 minutes, and many last less than 1 minute. An increasing number of callers no longer leave messages; they disconnect and send an e-mail.

The total PBX station traffic load during Busy Hour is simply the sum of the individual station user traffic requirements. If ten station users are connected to the network for 10 minutes during the same hour, the total traffic load on the switch network would be 60 CCS (10 station users × 10 minutes/station user, or 10 × 6 CCS). If the probability of blocking level was 1 percent, the traffic requirement would be noted as 60 CCS P(0.01). The total PBX station traffic load is rarely calculated, however, unless the switch network design is based on a single TDM bus or switch matrix. PBX traffic loads are better calculated for groups of station users sharing access to the same switch network element, assuming station users with similar traffic requirements are grouped together.
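As a rough sketch of this bookkeeping, the snippet below sums per-station loads for each shared switch network element. The element names (`bus-A`, `bus-B`) and the second group of four stations are made up for illustration; only the ten-station, 10-minute case comes from the text.

```python
from collections import defaultdict


def load_per_element(stations):
    """Sum Busy Hour load in CCS per switch network element.

    stations: iterable of (element_name, busy_hour_minutes) pairs.
    """
    loads = defaultdict(float)
    for element, minutes in stations:
        loads[element] += minutes * 60 / 100  # minutes -> CCS
    return dict(loads)


# Ten stations at 10 minutes each (the example in the text) on one bus,
# plus a hypothetical second bus with four stations at 15 minutes each.
stations = [("bus-A", 10)] * 10 + [("bus-B", 15)] * 4
for element, ccs in load_per_element(stations).items():
    print(f"{element}: {ccs:g} CCS, P(0.01)")
# bus-A: 60 CCS, P(0.01)
# bus-B: 36 CCS, P(0.01)
```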

For switch network traffic engineering calculations, most customers use an average traffic load estimate to represent all station users instead of segmenting the station user population by traffic load requirement. A different approach is recommended for traffic engineering a PBX system. Station users should be segmented into different traffic rating groups to ensure that switch network resources are optimized for each category of station user. In every PBX system there are some station users with very high traffic rating requirements, such as attendant console operators. Other station port types with very high traffic rating requirements include ACD call center agents, group answering positions, voice mail ports, and IVR ports. Each of these station ports typically will have a 24 CCS traffic load, although customers usually prefer these ports to have nonblocking [36 CCS, P(0.01)] switch network access and state so in their system requirements. Averaging the high traffic, moderate traffic, and low traffic station ports will result in a traffic engineered system that blocks an unacceptable percentage of calls for attendant positions because a rarely used telephone in the basement is using switch network resources instead of more important user stations.
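To see why averaging hides the high traffic ports, consider the small sketch below. The port counts and the 2 CCS low traffic figure are hypothetical; the 24 CCS and 9 CCS figures are the typical values quoted in the text.

```python
# Hypothetical population: group -> (number of ports, Busy Hour load per port in CCS)
groups = {
    "high (attendant, ACD, voice mail, IVR)": (20, 24),
    "moderate": (200, 9),
    "low": (280, 2),
}


def average_ccs(groups):
    """System-wide average load per port, ignoring group boundaries."""
    total_ccs = sum(count * ccs for count, ccs in groups.values())
    total_ports = sum(count for count, _ in groups.values())
    return total_ccs / total_ports


avg = average_ccs(groups)
print(f"system-wide average: {avg:.2f} CCS per port")  # 5.68 CCS per port
for name, (count, ccs) in groups.items():
    # A positive gap means an average-based design under-provisions this group.
    print(f"{name}: needs {ccs} CCS, gap versus average {ccs - avg:+.2f} CCS")
```

In this made-up population the average is well under 6 CCS, so a design based on it would give the 24 CCS ports roughly a quarter of the capacity they need.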

As an example, a Nortel Networks Meridian 1 Option 81C, based on a Superloop local TDM bus design with 120 talk slots and a port carrier shelf that can typically support 384 ports, should be configured as follows to support these station user traffic groups satisfactorily:

- A maximum of 120 very high traffic station users, 36 CCS, P(0.01): stations configured on a single port carrier shelf supported by a dedicated Superloop bus
- About 250 moderate traffic station users, 9 CCS, P(0.01): stations configured on a single port carrier shelf supported by a dedicated Superloop bus
- About 500 low traffic station users, 4 CCS, P(0.01): stations configured across two port carrier shelves supported by a dedicated Superloop bus

A single Superloop bus can adequately support each traffic group in this example, although the number of station users differs across the group categories. If the maximum number of potential traffic sources, or station users, is no larger than 120, then the Superloop bus is rated at 3,600 CCS, P(0.01). This is the maximum traffic handling capacity of a Superloop bus. According to the original Meridian 1 documentation guide, the Superloop bus is rated at slightly less than 3,000 CCS, P(0.01), if the port carrier shelf is configured for about 256 station users. As the number of potential traffic sources increases, the traffic handling capacity decreases for a given probability of blocking level. The exact traffic rating for a specific number of station users is available from a computer-based Meridian 1 configurator. Figure 1 illustrates the CCS traffic handling capabilities of a Meridian 1 Superloop with 120 available talk slots. Customers with very high traffic requirements can configure a single Meridian 1 IPE shelf with up to four Superloops, each dedicated to four port card slots. Figure 2 illustrates how a port carrier shelf can be segmented.

Traffic handling capacities for any PBX system local TDM bus are comparable in concept to Meridian 1 Superloop bus ratings:

- If the number of potential traffic sources is smaller than or equal to the number of available talk slots, then station traffic can be rated at 36 CCS (nonblocking switch network access).
- If the number of potential traffic sources is larger than the number of available talk slots, then the station traffic rating is less than 36 CCS. The traffic rating will decrease as the number of potential traffic sources increases.

The traffic handling capacity of the local TDM bus declines according to an exponential equation used to calculate probability of blocking levels. Most PBX designers assume a Poisson arrival pattern of calls together with an approximately exponential distribution of call durations. The exponential distribution is based on the assumption that a few calls are very short, many calls last a few minutes (1 or 2 minutes), and the number of calls decreases exponentially as call duration increases, with very few calls longer than 10 minutes. The actual traffic engineering equations (based on queuing models), call arrival distribution characteristics, and station user call attempt characteristics that determine the local TDM bus traffic rating at maximum port capacity (if switch network access is not nonblocking) are known only to the PBX manufacturer.

Regardless of the actual traffic engineering equation used by the manufacturer, the calculated traffic rating will be based on three inputs:

- Potential traffic sources
- Available talk slots
- Probability of blocking
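The sketch below shows one way these three inputs could be combined. It is not any manufacturer's equation: it assumes the textbook Poisson (blocked-calls-held) model, which treats the caller population as effectively infinite, so the total bus capacity here depends only on talk slots and blocking level, and the number of sources only determines how that capacity is shared per station. All function names are hypothetical.

```python
import math

CCS_PER_ERLANG = 36.0  # one talk slot busy for the full hour = 36 CCS


def poisson_blocking(offered_erlangs: float, talk_slots: int) -> float:
    """Probability that talk_slots or more calls are present (Poisson model)."""
    if offered_erlangs <= 0:
        return 0.0
    log_a = math.log(offered_erlangs)
    # Sum P(k calls present) for k = 0 .. talk_slots - 1, computed in log space.
    below = math.fsum(
        math.exp(k * log_a - offered_erlangs - math.lgamma(k + 1))
        for k in range(talk_slots)
    )
    return min(1.0, max(0.0, 1.0 - below))


def max_offered_ccs(talk_slots: int, blocking: float) -> float:
    """Largest offered load (CCS) whose Poisson blocking stays at or below target."""
    lo, hi = 0.0, float(talk_slots)  # blocking at talk_slots erlangs is about 0.5
    for _ in range(60):  # bisection: blocking rises monotonically with load
        mid = (lo + hi) / 2.0
        if poisson_blocking(mid, talk_slots) <= blocking:
            lo = mid
        else:
            hi = mid
    return lo * CCS_PER_ERLANG


def station_rating_ccs(sources: int, talk_slots: int, blocking: float) -> float:
    """Per-station traffic rating from sources, talk slots, and blocking level."""
    if sources <= talk_slots:
        return CCS_PER_ERLANG  # nonblocking: every source can hold a slot all hour
    return min(CCS_PER_ERLANG, max_offered_ccs(talk_slots, blocking) / sources)


for sources in (120, 256, 384, 500):
    print(sources, round(station_rating_ccs(sources, 120, 0.01), 1))
```

Because the model and its assumptions differ from whatever a vendor configurator uses, the printed ratings should be read as ballpark figures, not as Meridian 1 specifications.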

A basic assumption used for most traffic analysis studies is a random (even) distribution of call arrivals during the Busy Hour. Traffic analysis studies also must make an assumption about call attempts that are blocked:

- Station users who encounter an internal busy signal on their first call attempt continue making call attempts until they are successful.
- Station users who encounter an internal busy signal on their first call attempt will not make other call attempts during a certain period.

In reality, station users who receive a busy signal will immediately redial. The assumption that a station user will not make another call attempt, if the first attempt is unsuccessful, is not realistic. The redial scenario is the assumption used by Poisson queuing model studies. The Poisson queuing model assumes that blocked calls are held in the system and that additional call attempts will be made until the caller is successful. For this reason, Poisson queuing model equations are commonly used by PBX traffic engineers to calculate internal switch network traffic handling capacities.
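For reference, the textbook form of the Poisson (blocked-calls-held) blocking probability can be written as shown here. The symbols are introduced for illustration and do not appear in the original text: $a$ is the offered load in erlangs (the load in CCS divided by 36) and $N$ is the number of available talk slots. Whether a given PBX manufacturer uses exactly this form is not stated.

$$
P(\text{blocking}) \;=\; \sum_{k=N}^{\infty} \frac{a^{k} e^{-a}}{k!} \;=\; 1 - \sum_{k=0}^{N-1} \frac{a^{k} e^{-a}}{k!}
$$

The second form is the one normally evaluated, since it involves only a finite sum.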

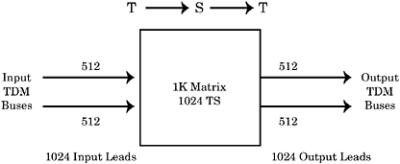

PBX systems with complex switch network designs (multiple local TDM buses, multitier Highway buses, center stage switch complexes) are far more difficult to analyze and traffic engineer than small PBX systems with a single local TDM bus design. Large, complex PBX switch network designs can provide a traffic engineer with many different switch connection scenarios that must be analyzed. Switch connections across local TDM buses require analysis of at least two switch network elements per traffic analysis calculation. To simplify traffic engineering studies, it is common system configuration design practice to minimize switch connections between different TDM buses by analyzing call traffic patterns among station users and providing station users access to trunk circuits on their local TDM buses. Centralizing trunk circuit connections may facilitate hardware maintenance and service, but it degrades system traffic handling capacity if more talk slots are used per trunk call.